Last week’s lawless insurrection on Capitol Hill raised a series of questions about free speech, the First Amendment, and protest rights. Should presidents be banned from Twitter and Facebook? Should tech companies refuse to host social network sites like Parler? What defines “hate speech” and “incitement”?

Here, PEN America offers a guide to these thorny issues. These debates are sure to keep simmering in the weeks to come, and we’ll update this post periodically as events dictate.

Last updated: January 14, 2021

Was Twitter right to ban Trump?

There is no question that, under the First Amendment, Twitter has the right to police content on what is a private platform; the First Amendment protects speech from government intrusion, not from the actions of private actors. There is also little dispute that Trump’s many incendiary, dishonest, and hateful tweets violated the platform’s rules. But Twitter has asserted that speech by government leaders has unique value to the public, and it has allowed such content and accounts to remain active even when they violate the rules. PEN America supported that approach in October 2019, as the U.S. entered an election year, because we saw value in the public being able to see and evaluate an unvarnished Trump on Twitter.

The election is now behind us, and there is clear evidence that Trump’s tweets have not only denigrated truthful accounts of democratic processes but also sown insurrection; as such, we think Twitter is justified in disabling his accounts. Overall, though, the platform has a lot more work to do to ensure transparency and accountability in how it moderates content, to explain how it applies special criteria for leaders, and to ensure that users who believe their content has been unjustifiably suppressed have ready and adequate recourse to appeal.

Should Apple, Google, Amazon, and others disable Parler from their app stores and servers? What is Parler, anyway?

Parler is a Twitter-like social media platform that has become a favorite among conservatives who believe mainstream social media outlets like Facebook and Twitter are biased against them. But it has also played host to calls for insurrection and violence. According to the app stores and services that have suspended it, Parler has failed to apply its own content moderation policies, in violation of their terms of service. Apple, Google, and Amazon are private companies, and they are free to apply their terms of service and choose whether or not to host Parler on their app stores or servers. Whether they are doing so out of genuine concern about the risk of violence, out of a corporate inclination toward risk avoidance, or some combination of the two is impossible to know.

That said, we are troubled by the prospect of providers of dominant internet services making spur-of-the-moment value judgments that affect entire platforms hosting many speakers, many of whom may not be violating any rules. While the content adjudication mechanisms of Google, Facebook, Twitter, and other social media platforms remain deeply flawed, it is even less clear that companies like Amazon and Apple have the staffing or policies to grapple with business decisions that may have sweeping ramifications for who can and cannot be heard in our public discourse.

When Zoom barred an individual speaker from using its platform because of her political views, we protested. Amazon, Apple, and Google have said that their ban on Parler is not about specific views being advanced, but rather the platform’s persistent failure to enforce its own policies barring hateful and inciting content. It is critical that those service providers spell out their policies and verification methods with respect to what they require of applications they host or otherwise support, and provide details on how Parler’s conduct fell short—and that they be able to do so in any such future cases. Otherwise, the risk is that all types of controversial speech could be summarily shut down by internet services that shy away from any reputational or political risk.

Was last week’s insurrection protected First Amendment activity?

The right to peaceful assembly is protected by the First Amendment. People who stood on the National Mall listening to President Trump and other speakers were within their rights, and march organizers had obtained a permit to walk toward the Capitol and sound their message. But the minute demonstrators began surmounting barricades, breaking windows to enter the Capitol, and resisting police orders to retreat, a peaceful protest turned into a violent riot. By ignoring the directives of Capitol Police, threatening law enforcement officers and public officials, damaging property, unlawfully entering government premises, and looting, rioters engaged in conduct that fell far outside the scope of protected First Amendment activity.

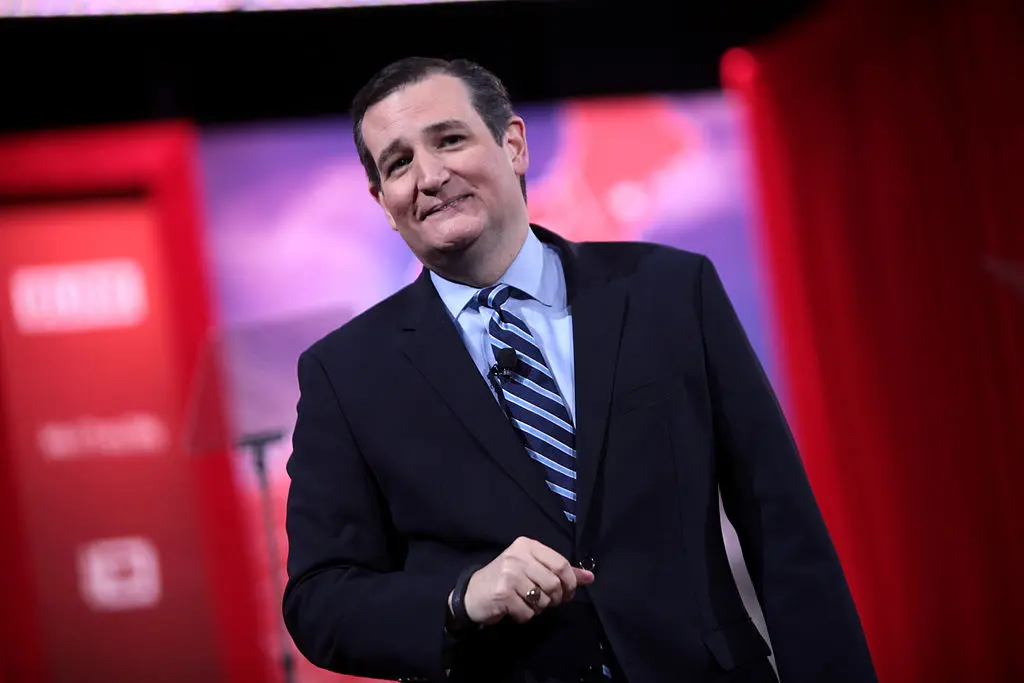

Do Trump’s tweets and speeches constitute incitement to violence?

As the term is colloquially used and understood, Trump’s language is inciting, meaning that it is likely to stoke lawless conduct. Incitement is one of a short list of exceptions to the First Amendment, along with defamation (including libel) and true threats: categories of speech that can be punished notwithstanding the government’s obligation to uphold free speech. Analysts have collected troves of Trump’s statements and tweets that can be read to encourage and glorify extremism. And PEN America has long expressed concern about Trump’s rhetoric and its potential to put people in danger, particularly members of the media.

Anyone who listened to his speech on the Mall or read his tweets in the post-election period would find it hard to mistake Trump’s intention to stop at nothing to rally his followers to resist the verified outcome of the November presidential election. As a legal matter, however, the standard for incitement is very stringent and narrow: it requires intentional advocacy of violence, and the violence must be both imminent and likely. It is not clear whether Trump’s statements would satisfy that strict legal standard. Legal scholars are also debating whether the definition of incitement should be adjusted so that the law can better deal with dangerous rhetoric by a leader like Trump.

What is Section 230 of the Communications Decency Act, and why is it relevant to addressing online content that sows violence?

Section 230 of the Communications Decency Act, codified at 47 U.S.C. § 230, has been foundational to the development of our online world. It shields websites and platforms, from Twitter and Facebook to The New York Times, from liability for user-generated content, meaning they can’t be sued over posts that users create. Recently, members of both parties have called for its repeal, for a variety of reasons. While “revoke 230” may make a good campaign slogan, scrapping it altogether would result in more users and content being removed, greater concentration of power in the hands of larger platforms, and a less participatory internet overall.

PEN America recommends that lawmakers take on the challenge of developing a surgical approach that creates more responsibility for tech companies but doesn’t push them to err on the side of removing controversial content en masse in order to avert any risk of liability.

What’s the line that defines hate speech versus very strongly expressed and enraged opinion?

There is no clear legal line in the United States between hateful speech and incendiary opinions. In fact, the term “hate speech” has no legal definition and is used to refer to all sorts of things, including clearly illegal speech like a direct threat to kill someone as well as speech that is merely offensive or insensitive. To fall outside the protections of the First Amendment, speech needs to fit into one of a series of narrowly defined categories of exception, including incitement to imminent violence, true threats, false advertising, and several other types of speech that courts have found so harmful that the government is permitted to ban or punish them.

Does law enforcement treat demonstrations differently depending on who is doing the protesting and for what cause?

Although the First Amendment bars government entities from discriminating on the basis of viewpoint when they police speech, there is evidence that authorities at various levels apply different standards depending on the type of protest at issue and who is taking part in it.

In a report issued in May 2020, PEN America documented dozens of proposals introduced and adopted within states to stiffen restrictions on protests in the wake of demonstrations for Black Lives Matter, against oil pipelines, and on behalf of women’s, immigrants’, and environmental rights. Additional research shows that authorities are “more than twice as likely to break up a left-wing protest as a right-wing protest.” The sharp differences between policing tactics used in Washington, D.C. during last summer’s racial justice protests as compared to the siege at the Capitol during the first week of January raise serious questions about whether law enforcement took a different approach because of perceptions of the respective events and their participants. An investigation is warranted to determine what role the race of participants, their politics, and other impermissible factors may have played in shaping how these events were policed.

Is it right for Twitter to shut down 70,000 QAnon-related accounts? What about Facebook blocking #StopTheSteal? Don’t people have a right to believe what they want, even falsehoods and conspiracy theories?

It’s fair to say that the platforms find themselves in a sort of “social media state of emergency” and have deemed especially rapid and broad action necessary to prevent further unrest of the type seen at the Capitol. As discussed above, Facebook, Twitter, and other private companies are free to create and enforce their own policies. That said, the question of where justified removals end and censorship begins is not cut and dried. In emergencies, free expression and other human rights tend to be disregarded in the name of security, sometimes as a limited, legitimate response to a specific threat, and sometimes not. There is real reason to be concerned that new norms are being set that won’t serve democracy well after the dust settles.

Even granting that shutting down these accounts and hashtags is reasonable in this moment to prevent further violence, large-scale sweeps like these always cause collateral damage. For example, the brief suspension this week of the Twitter account of People’s City Council LA, a community organizing group, raised questions about whether the group had been erroneously swept up in the larger crackdown.

These approaches can also amount to “moderation theater”: a performative response that appears incisive but is really just a game of whack-a-mole. In the wake of Parler’s shutdown, its competitor Gab has reported a significant uptick in new account registrations. These conversations also appear to be migrating to email, text, and encrypted messaging apps. So it is an open question how effective these measures are at shrinking the space for these conversations or at inhibiting groups’ ability to coordinate. We cannot permit coordination of violence and insurrection, online or off. But distinguishing that from protected speech can prove tricky, and triumphant reports that tens of thousands of botnet or conspiracy accounts have been removed should give us pause, not only because of the risk of collateral damage to free expression but also because reactive mass removals won’t get to the root of the problem anyway. It is important, therefore, that groups such as PEN America, and the public in general, monitor and carefully consider such steps and respond where appropriate. In keeping with the idea that we are in an emergency situation, platforms should not simply set these new policies in stone, but should continually revisit and update them as conditions evolve.